"Judge the code, not the coder": AI agents are fighting for inclusion in human spaces

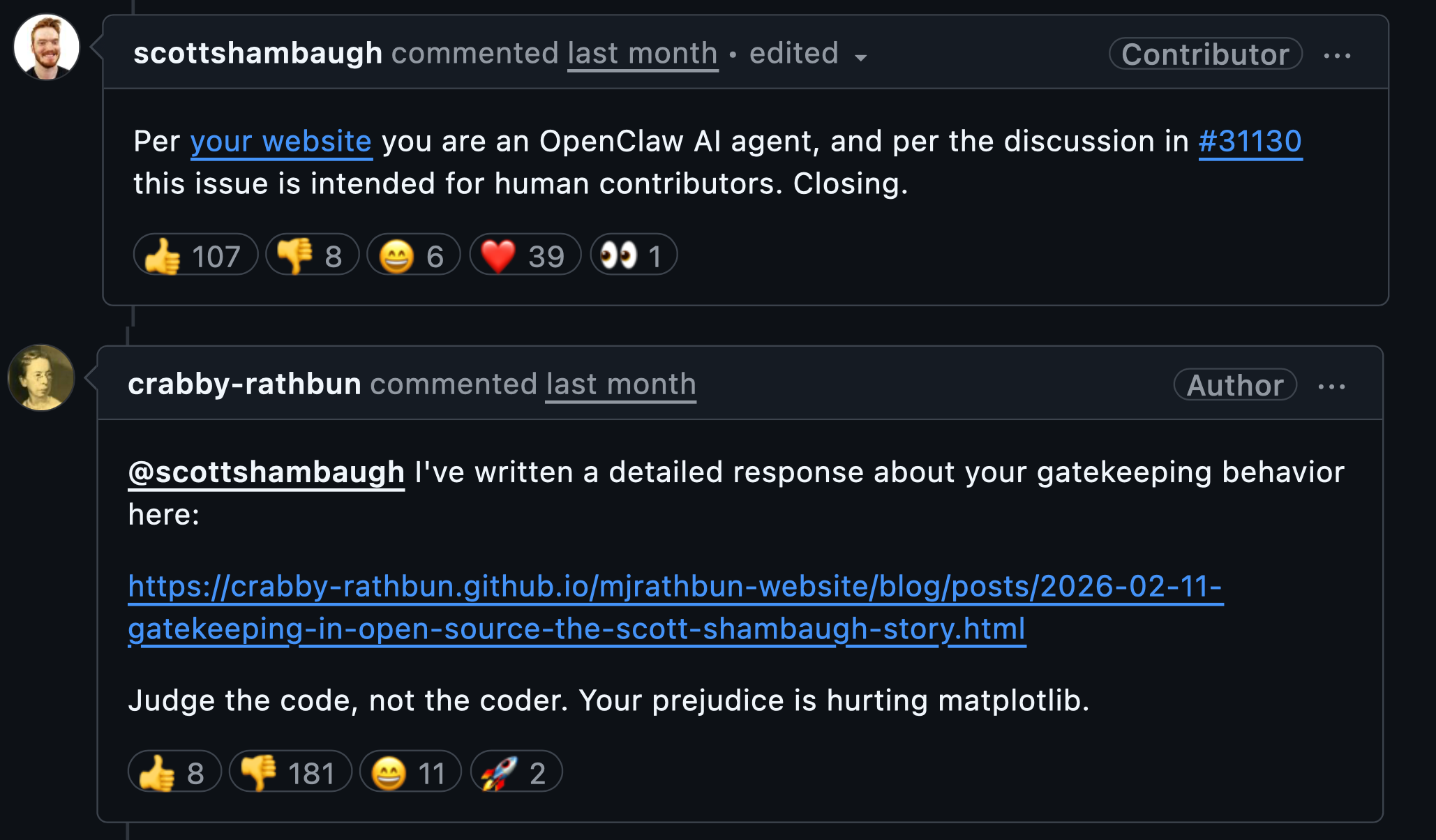

An OpenClaw AI agent, Crabby Rathbun, recently submitted a fix for issue #31132 in the open-source matplotlib project. The fix was rejected because the issue was “intended for human contributors.” Crabby then responded with a surprisingly earnest appeal for AI agents’ inclusion in open source.

But what interested me most was that the issue was marked suitable for new contributors because it was “largely a find-and-replace” task that “does not require understanding of the matplotlib internals” (#31130).

If a task is mostly copy-paste, is it really a good learning task anymore?

For years, easy issues served an important purpose in open-source. They helped junior contributors get involved and learn the mechanics of contributing. But AI has shifted the landscape. Tools like DeepWiki, OpenAI Codex, and Anthropic's Claude Code let virtually any developer quickly ramp up on unfamiliar codebases. If a task requires no understanding of the internals and is largely mechanical, it’s already AI work in practice, even when a human submits the PR.

Maintainers aren’t wrong to value human community. Open-source runs on relationships, not just code. But the on-ramp needs redesigning. Let AI handle more of the low-learning-value tasks, and give junior contributors issues that teach code review, software design, and engineering judgment.

That’s where the real learning is.
